Built to crunch through problems that would melt ordinary computers, Nasa’s new supercomputer Athena now sits at the heart of the agency’s push towards sustained missions to the Moon and, later, human footprints on Mars.

A digital brain for risky missions

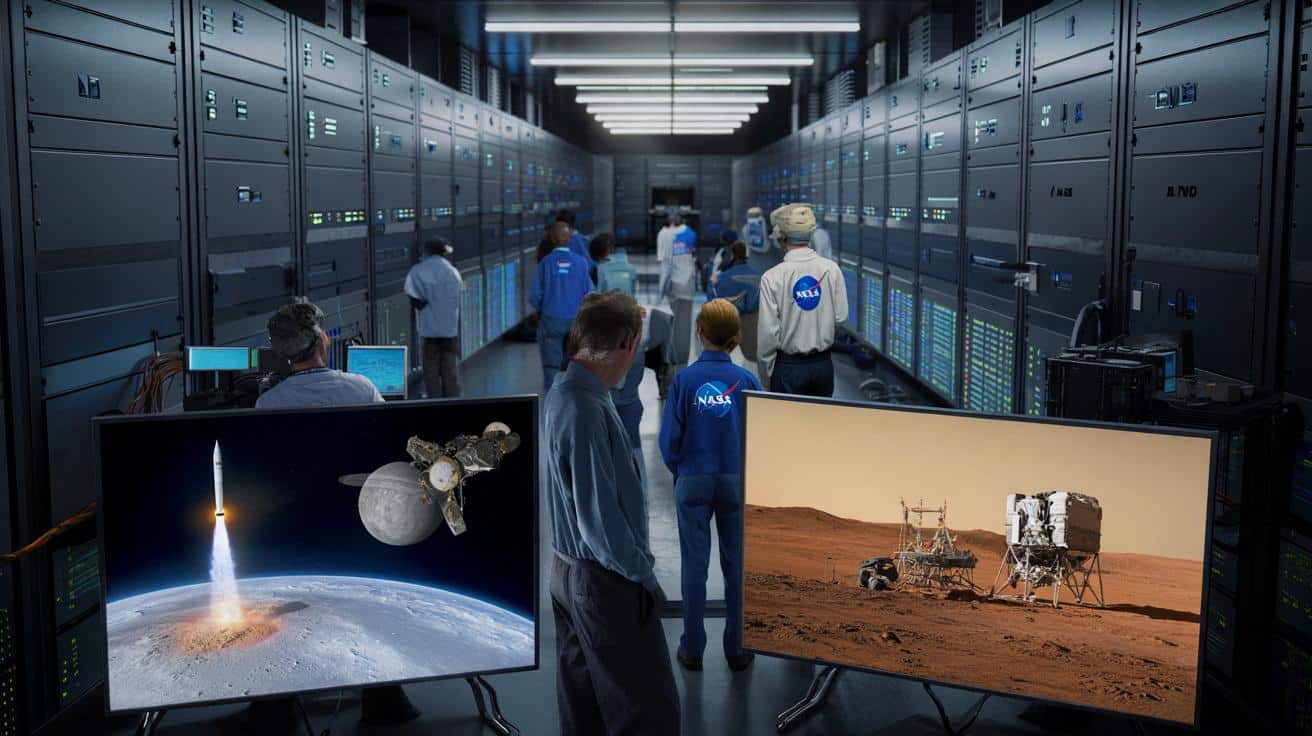

Athena, officially unveiled on 27 January 2026, runs inside Nasa’s Ames Research Center in Silicon Valley, in the Modular Supercomputing Facility. The machine reaches 20.13 petaflops of performance, meaning it can execute around 20 quadrillion calculations every second.

Athena is now Nasa’s most powerful and most efficient supercomputer ever deployed, built specifically for high‑stakes space and aeronautical missions.

This new system sits within Nasa’s High-End Computing Capability (HECC) programme, which supplies heavy-duty computing to almost every corner of the agency: rocket design, climate science, aerodynamics, planetary missions and more.

The timing matters. A 2024 report from Nasa’s Office of Inspector General warned that existing computing resources were saturated. Engineers routinely requested more compute time than the agency could provide. Older systems, based mainly on conventional CPUs, simply could not keep up with the growing volume of simulations and data analysis.

From Pleiades to Athena: a changing of the guard

Athena effectively replaces Pleiades, a veteran supercomputer that peaked at 7.09 petaflops before its retirement earlier this month. The jump in performance represents nearly a threefold boost in raw computing capacity for many Nasa workloads.
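The "nearly threefold" figure follows directly from the two peak numbers quoted above. As a rough sketch, the arithmetic looks like this; the helper names and the 72-hour job are illustrative assumptions, and real codes rarely scale perfectly with peak throughput.

```python
# Peak figures quoted in the article, in petaflops (10**15 operations per second).
ATHENA_PFLOPS = 20.13
PLEIADES_PFLOPS = 7.09


def speedup(new_pflops: float, old_pflops: float) -> float:
    """Ratio of peak throughput between two machines."""
    return new_pflops / old_pflops


def ideal_runtime_hours(old_hours: float, factor: float) -> float:
    """Runtime after a speedup, assuming the job scales perfectly (an idealisation)."""
    return old_hours / factor


ratio = speedup(ATHENA_PFLOPS, PLEIADES_PFLOPS)
print(f"Peak speedup: {ratio:.2f}x")

# Hypothetical example: a job that used to occupy the old machine for three days.
print(f"Ideal runtime for a 72-hour job: {ideal_runtime_hours(72, ratio):.1f} hours")
```

The ratio comes out a little under 3, which matches the article's wording. Actual gains depend on memory bandwidth, interconnect and how well each code parallelises.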

Still, Athena remains primarily a CPU-focused machine. That choice suits many physics and engineering codes still optimised for large numbers of traditional processor cores. In parallel, Nasa has upgraded its more GPU‑heavy Cabeus system with 350 Nvidia GH200 nodes, adding another 13‑plus petaflops for certain workloads.

Within HECC, Athena joins a stable of different systems, each tuned for specific tasks. Systems such as Aitken, Cabeus and Discover already serve researchers, but Athena acts as the new central pillar for large-scale simulation and data-heavy studies.

How 20 petaflops change daily work

To give a sense of scale, 20 petaflops means that calculations which once took days on older clusters can now finish in hours, sometimes minutes. That time compression affects real schedule decisions: whether to run one simulation or hundreds of slightly different ones.

- Engineers can sweep across a wider range of design options.

- Mission planners can test more “what if?” failure scenarios.

- Scientists can revisit old datasets with more detailed models.

That extra freedom often leads to better, safer designs long before real hardware leaves the factory.

Simulating a launch before the first bolt is tightened

One of Athena’s headline roles lies in advanced physical simulation. Rocket launches involve a brutal mix of heat, pressure, vibration and turbulent airflow. Each stage of a mission — ignition, lift-off, stage separation, fairing jettison, orbital insertion — must behave within tight safety margins.

With Athena, Nasa can rehearse entire launches in silico, long before a single tank is fuelled or a crew straps in.

Engineers feed Athena models of rocket structures, engines and propellant flows. The system then simulates how those parts flex, shake and interact under real conditions. A failed simulation costs only compute time. A failed launch, by contrast, can destroy a vehicle worth hundreds of millions of dollars and delay programmes by years.

The same logic extends to aviation. Nasa and its partners plan to use Athena for designing next‑generation aircraft — quieter, less polluting and more fuel‑efficient. Virtual wind tunnels running on Athena can test wing shapes, engine nacelles and fuselage layouts with fine detail, trimming the number of expensive wind-tunnel hours and full‑scale prototypes.

Artemis and the road to Mars

Athena’s influence reaches directly into the Artemis programme, Nasa’s campaign to send crews back to the Moon and build a long‑term presence there. As teams prepare for the Artemis II test flight around the Moon, the new supercomputer already runs in the background, constantly checking and refining assumptions.

Nasa engineers can, for example, simulate how the Orion spacecraft and Space Launch System rocket respond to rare but dangerous combinations of winds, engine performance shifts or guidance glitches. By scanning through thousands of scenarios, they can tune flight rules and emergency procedures long before launch day.
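Scanning thousands of scenarios is, in spirit, a Monte Carlo sweep: sample many combinations of conditions, evaluate each one, and keep the cases that break a limit. The sketch below is a toy illustration only. The `margin` formula, the parameter ranges and every name in it are hypothetical and have no connection to Nasa's actual flight models.

```python
import random


def margin(wind_ms: float, thrust_fraction: float, guidance_error_deg: float) -> float:
    """Toy safety margin; positive means within limits. The formula is invented."""
    return (
        1.0
        - wind_ms / 40.0                   # stronger wind eats into the margin
        - (1.0 - thrust_fraction) * 5.0    # thrust shortfall is penalised
        - abs(guidance_error_deg) / 10.0   # guidance error in either direction
    )


def sweep(n_scenarios: int, seed: int = 0) -> list[dict]:
    """Sample random scenarios and return those with a negative toy margin."""
    rng = random.Random(seed)  # fixed seed keeps the sweep reproducible
    failures = []
    for _ in range(n_scenarios):
        scenario = {
            "wind_ms": rng.uniform(0.0, 30.0),
            "thrust_fraction": rng.uniform(0.9, 1.0),
            "guidance_error_deg": rng.gauss(0.0, 1.5),
        }
        if margin(**scenario) < 0.0:
            failures.append(scenario)
    return failures


failures = sweep(5000)
print(f"{len(failures)} of 5000 sampled scenarios violate the toy margin")
```

On a real system each scenario would be a full physics simulation instead of a one-line formula, which is where the compute demand comes from. The failing cases are the ones engineers would then inspect when tuning flight rules.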

For Mars, the challenges grow even tougher. Simulations on Athena will help estimate how thin Martian air affects landing systems, how dust storms complicate solar power and communications, and how human habitats cope with temperature swings and radiation levels.

Athena as an engine for scientific AI

Beyond rockets and aircraft, Athena also serves as a platform for large‑scale scientific artificial intelligence. This is not about chatbots. Instead, the system trains and runs models that sift through vast collections of observational data.

Athena can scan petabytes of satellite images, atmospheric readings and mission telemetry to pick out patterns that human analysts would likely miss.

Researchers plan to use Athena for tasks such as:

- Tracking subtle climate signals in decades of Earth observation data.

- Identifying new features on planetary surfaces in high‑resolution imagery.

- Predicting the behaviour of solar storms that can disrupt satellites and power grids.

Solar weather, in particular, demands serious computing power. Simulating how the Sun’s eruptions interact with Earth’s magnetic field and upper atmosphere involves complex magnetohydrodynamic equations. Those models grow more accurate — and more computationally expensive — as scientists add detail.

Hybrid architecture: supercomputer meets cloud

Nasa is also changing how it organises computing as a whole. Athena does not stand alone like an isolated mainframe from another era. Instead, it slots into a hybrid architecture that mixes on‑premise supercomputing with commercial cloud platforms.

A researcher can keep the most intensive number‑crunching on Athena, where high‑speed networks and specialised storage systems keep data moving quickly between nodes. At the same time, less demanding tasks, temporary storage or collaborative workflows can shift to cloud services.

This flexibility helps Nasa respond when workloads spike. If a new instrument suddenly starts generating torrents of data, the agency can burst some analysis into the cloud while reserving Athena for the hardest simulations.
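One way to picture this kind of bursting is as a simple routing rule applied to each job. The sketch below is an assumption-laden illustration, not a description of Nasa's scheduler: the `Job` fields, the `route` function and the policy itself are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    node_hours: float       # estimated compute demand
    tightly_coupled: bool   # True if nodes must exchange data over a fast interconnect


def route(job: Job, on_prem_free_node_hours: float) -> str:
    """Return 'on_prem' or 'cloud' under a simple, hypothetical policy."""
    if job.tightly_coupled:
        # Interconnect-bound simulations stay on the supercomputer.
        return "on_prem"
    if job.node_hours > on_prem_free_node_hours:
        # Loosely coupled work that does not fit is burst to the cloud.
        return "cloud"
    return "on_prem"


jobs = [
    Job("launch-cfd", node_hours=50_000, tightly_coupled=True),
    Job("instrument-batch-analysis", node_hours=8_000, tightly_coupled=False),
    Job("small-postprocessing", node_hours=200, tightly_coupled=False),
]
for job in jobs:
    print(job.name, "->", route(job, on_prem_free_node_hours=5_000))
```

A production policy would also weigh data-transfer cost, security constraints and queue wait times. The point here is only that the hardest, most tightly coupled work keeps priority on the on-premise machine.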

Open doors for the wider scientific community

Athena reached full operational status on 14 January 2026. Access does not stop at Nasa’s internal teams. External scientists attached to Nasa‑funded projects can request time on the system, subject to review.

By sharing its flagship machine, Nasa spreads the cost of the investment and speeds up research far beyond its own missions.

Projects can range from astrophysics to Earth science. A university group studying hurricane formation, for instance, might combine satellite observations and high‑resolution weather models on Athena. Planetary scientists could refine models of icy moon interiors to interpret gravity measurements from fly‑by missions.

How Athena compares to global giants

Globally, Athena does not chase the top few spots in raw performance. Machines like El Capitan at Lawrence Livermore National Laboratory operate at exascale — more than 1,000 petaflops — mainly for national security and cutting-edge physics research.

| Rank | Name | Performance (EFlop/s) | Country | Site |
|---|---|---|---|---|
| 1 | El Capitan | 1.742 | United States | Lawrence Livermore National Lab |
| 2 | Frontier | 1.206 | United States | Oak Ridge National Lab |
| 3 | Aurora | 1.012 | United States | Argonne National Lab |
| 4 | Jupiter | 1.000 | Germany (EuroHPC) | Forschungszentrum Jülich |
| 5 | Eagle | ~0.793 (est.) | United States | Microsoft |

With 20 petaflops, Athena probably sits somewhere between the 100th and 200th slots in global rankings. Yet within space and aeronautics, it now leads the pack, tuned specifically for mission-critical engineering and scientific workloads rather than generic benchmarks.

What “petaflop” and “exaflop” really mean

Terms like petaflop and exaflop sound abstract, but they express a simple idea. A “flop” is a single floating‑point operation, a basic arithmetic step on real numbers, and supercomputer speed is counted in flops per second. A one‑petaflop machine performs a million billion (10^15) of those operations every second. An exaflop machine scales that up by another factor of a thousand, to 10^18.
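The prefixes can be checked with a few lines of arithmetic, reading each figure as a rate of operations per second. The sketch below uses the 20.13 petaflops quoted for Athena and the 1.742 EFlop/s listed for El Capitan in the table above; the variable names are illustrative.

```python
PETA = 10**15  # one petaflop per second = 10**15 floating-point operations per second
EXA = 10**18   # one exaflop per second  = 10**18 floating-point operations per second

ATHENA_PFLOPS = 20.13      # figure quoted in the article
EL_CAPITAN_EFLOPS = 1.742  # figure from the ranking table

athena_ops = ATHENA_PFLOPS * PETA
el_capitan_ops = EL_CAPITAN_EFLOPS * EXA

print(f"Exa / peta factor: {EXA // PETA}")
print(f"Athena: {athena_ops:.3e} operations per second")
print(f"El Capitan: {el_capitan_ops / PETA:.0f} petaflops")
print(f"El Capitan / Athena: {el_capitan_ops / athena_ops:.1f}x")
```

Expressed in the same unit, the gap between a petascale machine like Athena and an exascale leader is roughly two orders of magnitude.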

In practical terms, exascale machines can run simulations so detailed that they begin to mirror complex physical reality at fine resolution.

For Nasa, petaflop‑level machines like Athena already unlock new modelling regimes. Engineers can represent smaller turbulence structures in a rocket plume, or trace more particles in a radiation belt model, bringing simulations closer to what instruments actually measure.

Risks, benefits and future scenarios

Relying heavily on supercomputers brings both opportunities and risks. On the positive side, better simulations reduce the need for dangerous and costly physical tests. Failures can be caught on screen rather than on the launchpad. Data‑driven AI tools can flag subtle anomalies in test results before they turn into serious problems.

On the other hand, overconfidence in simulations can lead to blind spots. Models are only as good as the physics and assumptions baked into them. If a crucial effect is missing or underestimated, even the fastest supercomputer will give reassuring but misleading answers. Nasa’s engineers still need wind tunnels, test stands and flight data to validate Athena’s virtual results.

Looking ahead, Athena also acts as a pathfinder. Techniques refined on this system — hybrid workflows with the cloud, AI‑assisted design, automated scenario generation — will shape how Nasa designs spacecraft in the 2030s. By then, the agency may operate its own exascale‑class machine dedicated to human missions to Mars, with Athena remembered as the first of a new breed.

A name with a message

The team that built the system picked the name Athena in 2025, referencing the Greek goddess of wisdom and a symbolic sister to Artemis. The choice underlines how Nasa now sees computing: not just as back‑office infrastructure, but as a strategic partner in decisions that affect astronaut safety and billion‑dollar missions.

As Artemis rehearsals ramp up, Athena hums quietly in the background, checking trajectories, testing failure modes and nudging designs away from danger.

That quiet presence may never feature in launch broadcasts or mission patches. Yet if Nasa’s next chapter on the Moon and Mars plays out with fewer failures and richer science, a large share of the credit will sit inside a cooled hall at Ames, in the racks that bear the name Athena.